Artificial neural networks

A framework for building machine learning algorithms that is inspired by the brain.

Big data

Data that cannot be efficiently analysed using conventional means, typically because of its volume, veracity, velocity, and/or variety.

Convolutional neural networks

A particular type of 'deep learning' network that is adept at dealing with images, speech and text.

Deep learning

An approach using very complex, multi-layered 'artificial neural networks' that require large amounts of training data but can perform very complex tasks such as image labelling, like identifying cars in a photograph.

Exploratory data analysis

The preliminary investigations of a data set in order to better understand its characteristics.

Feature selection

Closely related to 'feature engineering'. The process of selecting the inputs to an algorithm and deciding how these inputs should be represented. For example, if we are trying to create an algorithm to predict how many free seats there are on a train journey, what is the best set of information about the journey: start time, end time, date, starting station, destination station, the colour of the train, or the weather?

Genetic algorithm

A process that mimics natural selection, where a solution evolves through the mixing, or ‘breeding’, and ‘mutation’ of a set of potential solutions. Most often used in robotics problems or in problems where there are a large number of good solutions and we are trying to find the best of these.
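
The breeding and mutation steps can be sketched in a few lines of Python. This is a minimal illustration, not a production algorithm: the toy problem (find a list of 20 bits containing as many 1s as possible), the function names and the mutation rate are all assumptions chosen for the example.

```python
import random

def fitness(bits):
    # Toy objective (assumed for illustration): count the 1s.
    return sum(bits)

def evolve(population, generations, rng, mutation_rate=0.05):
    for _ in range(generations):
        # Rank candidates and keep the fitter half as parents.
        population = sorted(population, key=fitness, reverse=True)
        parents = population[: len(population) // 2]
        children = [parents[0]]  # carry the best candidate over unchanged
        while len(children) < len(population):
            mother, father = rng.sample(parents, 2)
            cut = rng.randrange(1, len(mother))
            child = mother[:cut] + father[cut:]  # 'breeding'
            # 'Mutation': flip each bit with a small probability.
            child = [1 - bit if rng.random() < mutation_rate else bit
                     for bit in child]
            children.append(child)
        population = children
    return max(population, key=fitness)

rng = random.Random(0)
start = [[rng.randint(0, 1) for _ in range(20)] for _ in range(30)]
best = evolve(start, generations=40, rng=rng)
print(fitness(best), "out of 20")
```

Because the best candidate is always carried into the next generation, the final solution can never be worse than the best starting candidate.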

Hashing

A mathematical process that takes data of any size and maps it to data of a fixed size. The process is generally difficult to reverse and is most commonly used in the storage of sensitive data, such as passwords or in index structures. Normally seen in action turning passwords like ‘Hunter2’ into ‘*****’.
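
As a small sketch of the 'any size in, fixed size out' property, the example below uses SHA-256 from Python's standard `hashlib` module. The choice of SHA-256 and the helper name `sha256_hex` are assumptions made for illustration.

```python
import hashlib

def sha256_hex(text: str) -> str:
    """Return the SHA-256 digest of text as a hexadecimal string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

short_digest = sha256_hex("Hunter2")
long_digest = sha256_hex("Hunter2" * 1000)

# Inputs of very different sizes map to digests of the same length.
print(len(short_digest), len(long_digest))
```

For stored passwords, a salted and deliberately slow function is the usual choice rather than a single fast hash like this one.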

Index

A structure that allows the efficient location of a piece of information in a data store.
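
A rough sketch of the idea, using a Python dictionary as the index over a small list of records. The records and function names are hypothetical.

```python
records = [
    {"id": 17, "name": "Ada"},
    {"id": 42, "name": "Grace"},
    {"id": 99, "name": "Alan"},
]

def find_by_scan(record_id):
    # Without an index: check every record in turn.
    for record in records:
        if record["id"] == record_id:
            return record
    return None

# The index maps each id straight to its position in the data store.
index_by_id = {record["id"]: position
               for position, record in enumerate(records)}

def find_by_index(record_id):
    position = index_by_id.get(record_id)
    return None if position is None else records[position]

print(find_by_index(42))
```

Both functions return the same record, but the indexed lookup does not need to examine every record to find it.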

Jupyter notebook

A document that contains live code, analysis and descriptive text, and that allows sharing and collaboration around a data analysis task.

Kolmogorov-Smirnov test

A mathematical approach to analysing two datasets to determine whether they come from the same distribution. Helps with understanding whether two groups in an experiment show different responses to a stimulus.
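
The quantity at the heart of the test is the largest gap between the two samples' empirical cumulative distribution functions. The sketch below computes only that statistic, in plain Python; it does not compute a p-value, and the function name and sample data are illustrative assumptions. Libraries such as SciPy provide a full implementation of the test.

```python
from bisect import bisect_right

def ks_statistic(sample_a, sample_b):
    """Largest gap between the two empirical distribution functions."""
    a = sorted(sample_a)
    b = sorted(sample_b)

    def ecdf(sorted_values, x):
        # Fraction of the sample that is less than or equal to x.
        return bisect_right(sorted_values, x) / len(sorted_values)

    # The gap can only change at observed values, so check each one.
    return max(abs(ecdf(a, x) - ecdf(b, x)) for x in a + b)

control = [1.1, 1.9, 2.4, 3.0, 3.2]
treated = [2.8, 3.5, 4.1, 4.4, 5.0]
print(ks_statistic(control, treated))
```

A statistic near 0 suggests the two samples look alike; a statistic near 1 means they barely overlap.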

Logistic regression

A model that typically predicts a binary outcome (e.g. true/false) from one or more continuous inputs, such as predicting whether someone will repay a loan based on their income.
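
As a hedged sketch of the loan example, the function below turns an income into a repayment probability using the logistic function. The intercept and weight are made-up values for illustration; in practice they would be estimated from historical data.

```python
import math

def repayment_probability(income_thousands, intercept=-4.0, weight=0.1):
    # Hypothetical coefficients, not fitted to any real data.
    z = intercept + weight * income_thousands
    # The logistic function squashes z into a probability between 0 and 1.
    return 1.0 / (1.0 + math.exp(-z))

for income in (20, 40, 80):
    print(income, round(repayment_probability(income), 3))
```

A binary prediction is then obtained by applying a cut-off, for example predicting 'will repay' when the probability is above 0.5.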

Machine learning

The field of study dealing with algorithms and models that improve their performance as they are provided with more data. This improvement continues until overfitting occurs and maximum performance has been reached.

Natural language processing

A field of study which attempts to train machines to understand and analyse human languages, contributing to applications such as automated customer services assistants on websites.

Overfitting

The scourge of modern data science. It generally occurs when a system has been ‘overtrained’ on a training data set and cannot generalise to data to which it has not been previously exposed.

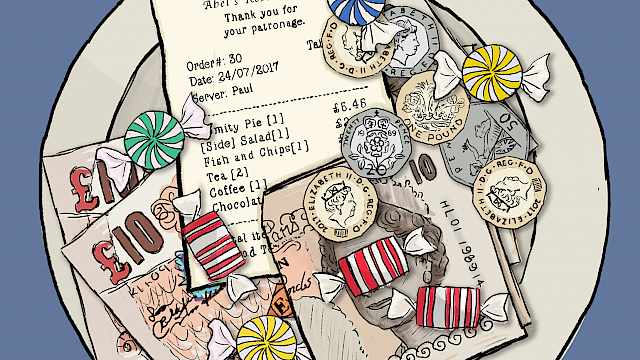
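
A toy sketch of the effect, under stated assumptions: the data, the single mislabelled training point and both 'models' are invented for illustration. The memoriser copies the label of the nearest training point, so it reproduces the training data perfectly, including its noise.

```python
def true_label(x):
    # The underlying pattern (assumed): values of 5 or more are positive.
    return x >= 5

train = [(x, true_label(x)) for x in range(10)]
train[2] = (2, True)  # one noisy, mislabelled training point
test = [(x + 0.4, true_label(x + 0.4)) for x in range(10)]

def memoriser(x):
    # Copies the label of the closest training point, noise included.
    nearest_x, nearest_label = min(train, key=lambda pair: abs(pair[0] - x))
    return nearest_label

def simple_rule(x):
    # A simpler model that happens to match the underlying pattern.
    return x >= 5

def accuracy(model, data):
    return sum(model(x) == label for x, label in data) / len(data)

print("memoriser:", accuracy(memoriser, train), accuracy(memoriser, test))
print("simple rule:", accuracy(simple_rule, train), accuracy(simple_rule, test))
```

The memoriser scores perfectly on the training data but worse on the unseen data, while the simpler rule does the reverse.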

Privacy

The expectation that personally identifiable information or other sensitive data will be treated securely and sensitively. Getting value from data whilst respecting the privacy of data subjects is the cornerstone of modern data protection laws.

Qualitative data

Data that are non-numerical in form.

R

A leading free software environment widely used for data analysis tasks.

Supervised learning

The process of learning from a set of labelled data. Typically used in classification techniques where we want to sort inputs into a number of different classes (e.g. email spam/not-spam).

Trust

Within data science, the perception of the credibility of a piece of data, a data source, a data processing system or a prediction.

Unsupervised learning

The process of learning from data that has not been labelled. Typically used in clustering techniques where we wish to sort input data into a number of groups that exhibit similar characteristics, such as grouping movies into genres on a streaming platform.
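
Clustering can be sketched with a very small version of k-means on a single number per item. Everything here is an assumption for illustration: the film runtimes, the two starting centres and the function name.

```python
def k_means_1d(values, centres, iterations=10):
    for _ in range(iterations):
        # Assign every value to its nearest centre.
        clusters = [[] for _ in centres]
        for value in values:
            nearest = min(range(len(centres)),
                          key=lambda i: abs(value - centres[i]))
            clusters[nearest].append(value)
        # Move each centre to the mean of the values assigned to it.
        centres = [sum(cluster) / len(cluster) if cluster else centres[i]
                   for i, cluster in enumerate(clusters)]
    return centres, clusters

runtimes = [88, 92, 95, 171, 178, 185]  # hypothetical film lengths in minutes
centres, clusters = k_means_1d(runtimes, centres=[80.0, 200.0])
print(clusters)
```

No labels are supplied at any point; the two groups emerge only from how close the values are to one another.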

Visualisation

The process for communicating complex information typically through imagery.

Word embeddings

A set of statistical 'natural language processing' techniques where words are allocated a vector of numbers. This vector of numbers effectively encodes the ‘meaning’ of the word. Machines can then use these vectors to better ‘understand’ a corpus of text.
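
One common way to compare such vectors is cosine similarity, sketched below. The three-dimensional vectors are invented for illustration; real embeddings are learned from text and usually have hundreds of dimensions.

```python
import math

# Hypothetical embeddings, hand-written for this example.
embeddings = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "banana": [0.1, 0.0, 0.9],
}

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

print(cosine_similarity(embeddings["king"], embeddings["queen"]))
print(cosine_similarity(embeddings["king"], embeddings["banana"]))
```

Words whose vectors point in similar directions score close to 1, which is how a machine can treat them as related in meaning.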

X-Axis

The horizontal axis on a graph, also called the abscissa, a term in use since at least the 13th century, when it was used by Leonardo of Pisa.

Yottabyte

One septillion bytes or 1 trillion Terabytes – about 200,000 trillion photos of Kim Kardashian!
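
The arithmetic can be checked in a few lines. The photo size of 5 megabytes is an assumption, chosen because it reproduces the figure quoted above; the variable names are illustrative.

```python
YOTTABYTE = 10**24  # one septillion bytes
TERABYTE = 10**12   # one trillion bytes

terabytes_per_yottabyte = YOTTABYTE // TERABYTE

assumed_photo_bytes = 5 * 10**6  # assumption: 5 MB per photo
photos_per_yottabyte = YOTTABYTE // assumed_photo_bytes

print(terabytes_per_yottabyte)  # one trillion
print(photos_per_yottabyte)     # 200,000 trillion
```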

Zipf’s law

A feature of all natural languages where the most frequent word will occur approximately twice as often as the second most frequent word, three times as often as the third most frequent word, etc.
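
Put another way, the word at rank r is expected to appear roughly 1/r times as often as the most frequent word. The sketch below computes those expected counts and also ranks the words in a piece of text so that the two can be compared. The function names and the sample sentence are assumptions for illustration, and a sentence this short will not follow the law closely.

```python
from collections import Counter

def zipf_expected_counts(top_count, ranks):
    # Expected count at rank r is the top count divided by r.
    return [top_count / rank for rank in range(1, ranks + 1)]

def ranked_counts(text):
    # Split on whitespace and rank words from most to least frequent.
    words = text.lower().split()
    return Counter(words).most_common()

print(zipf_expected_counts(600, 3))
print(ranked_counts("the cat the dog the cat"))
```

On a large corpus, plotting the observed counts against the expected ones is a quick way to see how well the text fits the pattern.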

Copyright Information

As part of CREST’s commitment to open access research, this text is available under a Creative Commons BY-NC-SA 4.0 licence. Please refer to our Copyright page for full details.