Orwell’s famous novel, 1984, describes a totalitarian government in which the party in power manipulates the minds of its citizens through perpetual war, government surveillance, propaganda, and aggressive police, and demands that they abandon their own perceptions, memories and beliefs in favour of party propaganda.

In this dystopian nightmare, people are forced against their will to adopt the beliefs of the ruling party. However, modern research in political science, psychology, and neuroscience suggests that people are often quite willing to adopt the (mis)beliefs of political parties and spread misinformation when it aligns with their political affiliations.

The party told you to reject the evidence of your eyes and ears. It was their final, most essential command. – George Orwell, 1984

While it is widely accepted that identification with a political party – or partisanship – shapes political judgments such as voting preferences or support for specific policies, there is now evidence that it may also shape belief in more elemental information. For example, US Democrats and Republicans disagree about scientific findings such as climate change, about economic issues, and even about facts that have little to do with political policy, such as crowd sizes. These examples make it clear that people can ignore their own eyes and ears even in the absence of a totalitarian regime.

The influence of partisanship on cognition is a serious threat to democracies, which assume that citizens have access to factual knowledge in order to participate in public debates and make informed decisions in elections and referenda. If that knowledge is biased, then the decisions citizens make are likely to be biased as well. Worse, there are reasons to believe that this knowledge can be deliberately distorted in order to shape the outcome of certain democratic processes.

For example, the UK Prime Minister, Theresa May, has publicly accused Russia of ‘planting fake stories’ to ‘sow discord in the West’ and suggested that fake news (spread by Russia) has influenced several national elections in Ukraine, Bulgaria, France, and the US, as well as the Brexit campaign. Likewise, roughly 126 million Americans may have been exposed to Russian trolls’ fake news on Facebook during the 2016 US Presidential election. These cases underscore the scope and consequences of political misinformation.

An identity-based model of political belief

We recently developed a model to understand how partisanship can lead people to value party dogma over truth. Because identification with a political party is a voluntary and self-selected process, people are usually attracted to parties that align with their personal ideology. Political parties are also social groups that generate a feeling of belonging and identity – similar to fans of a sports club.

Indeed, neuroimaging research has found that the human brain represents political affiliations similarly to other forms of group identities that have nothing to do with politics. As such, identification with a political party is likely to activate mental processes related to group identities in general.

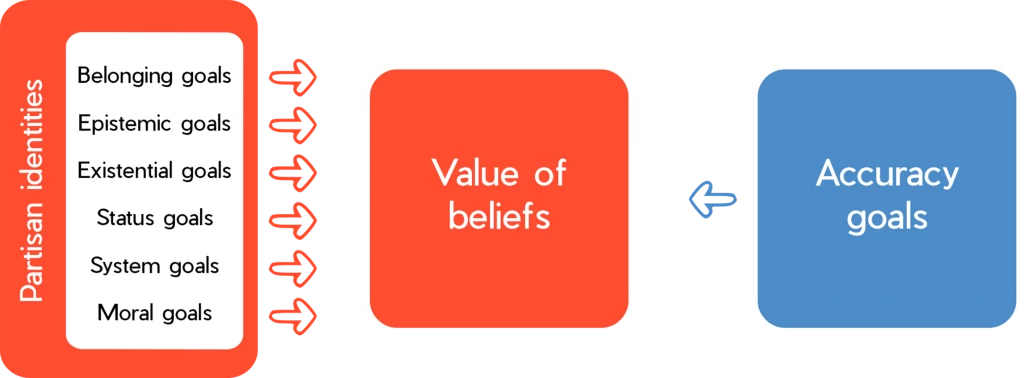

Social groups fulfil numerous basic social needs such as belonging, distinctiveness, epistemic closure, access to power and resources, and they provide a framework for the endorsement of (moral) values (cf. Figure 1). Political parties fulfil these needs through different means. For example, political rallies and events satisfy belonging needs; party elites and think tanks provide policy information; party members model norms for action; electoral success confers status and power; and party policy provides guidance on values.

Because partisan identities can fulfil these goals, they generate a powerful incentive to distort beliefs in a manner that contradicts the truth. As in a tug of war, when these identity goals pull harder than our accuracy goals, they lead us to believe in fake news, propaganda, and other misinformation. In turn, these beliefs shape political attitudes, judgements, and behaviours.

The importance of each goal varies across individuals and contexts. When our accuracy goals are more important than the other goals, we will be more likely to arrive at accurate conclusions (insofar as we have access to factual information). Conversely, when one or more identity goals outweigh our accuracy goal, we will be more likely to distort our beliefs to align with the beliefs of our favourite political party or leader. When party beliefs are factually correct, our identity goals will generate accurate beliefs; but when party beliefs are incorrect, our identity goals will lead us to false beliefs.

This process is likely intensified when competing political parties threaten moral values and access to resources since these factors increase group conflict. Political systems dominated by two competing groups, like the Labour and Conservative parties in the UK, may heighten partisan motives because they are particularly effective at creating a sense of ‘us’ vs. ‘them’.

How can we reduce biases related to partisanship?

To reduce partisan bias, our model suggests that interventions should either fulfil social needs that drive partisanship or increase the strength of accuracy goals. To make this effective in a political context, policymakers need to first determine which goals are valued by an individual and then aim to fulfil those goals. For example, when people are hungry for belonging, interventions should either affirm a feeling of belonging or make other social groups available or salient to each individual.

When trying to correct a false belief, one risks threatening the target’s identity or revealing a gap in their knowledge, creating a feeling of uncertainty that is highly aversive. For instance, one study found that simply denying a false accusation did not change beliefs. However, denying the accusation while also providing an alternative explanation for the event did. Thus, an effective way of correcting people’s beliefs about false news might be to enrich the corrective information in order to provide a broader account of the news.

Another strategy is to enhance accuracy goals. This can be done by activating identities associated with accuracy, such as that of a scientist, an investigative journalist, or simply someone who cares about the truth. Another possibility is to incentivise accuracy or accountability. For instance, incentives and education that foster curiosity towards science, accuracy, and accountability can reduce partisan bias. Interacting with counter-partisan sources or being made aware of one’s ignorance about policy details also reduces political polarisation.

Another factor to keep in mind while building interventions is the importance of the source of the message. We know that people resist influence from out-groups. Therefore, interventions should aim at appealing to a superordinate identity that includes all targets of the message – like all British people – or use a trusted source within the targets' political party to deliver the message.

Conclusion

Partisanship represents a threat to democracy. For example, there is evidence that foreign propaganda leverages existing social and moral divisions to drive a wedge between citizens. Social media might exacerbate expressions of moral outrage. Indeed, our research has found that moral emotional language is more likely to be shared on social media, but only within one’s political group – which can lead to disconnected political echo chambers and political polarisation. It is crucial to tackle these issues to ensure a healthy and robust democracy.

Read more

William J. Brady, Julian A. Wills, John T. Jost, Joshua A. Tucker, & Jay J. Van Bavel. 2017. Emotion shapes the diffusion of moralized content in social networks. Proceedings of the National Academy of Sciences, 1–6. Available at: http://www.pnas.org/content/114/28/7313

Mina Cikara, Jay J. Van Bavel, Zachary Ingbretsen, & Tatiana Lau. 2017. Decoding "us" and "them": Neural representations of generalized group concepts. Journal of Experimental Psychology: General, 146(5): 621–631. Available at: https://goo.gl/9phhRY

Dan M. Kahan. 2016. The expressive rationality of inaccurate perceptions. Behavioral and Brain Sciences, 40: 26–28. Available at: https://goo.gl/84zt7k

Thomas J. Leeper & Rune Slothuus. 2014. Political parties, motivated reasoning, and public opinion formation. Political Psychology, 35(S1): 129–156. Available at: https://goo.gl/g5KJAX

Jay J. Van Bavel & Andrea Pereira. 2018. The Partisan Brain: An Identity-Based Model of Political Belief. Trends in Cognitive Sciences, 22(3): 213–224. Available at: https://psyarxiv.com/ak642/

Y. Jenny Xiao, Géraldine Coppin & Jay J. Van Bavel. 2016. Perceiving the world through group-colored glasses: A perceptual model of intergroup relations. Psychological Inquiry, 27(4): 255–274. Available at: https://goo.gl/zTjUVg

Copyright Information

As part of CREST’s commitment to open access research, this text is available under a Creative Commons BY-NC-SA 4.0 licence. Please refer to our Copyright page for full details.

IMAGE CREDITS: Copyright ©2024 R. Stevens / CREST (CC BY-SA 4.0)