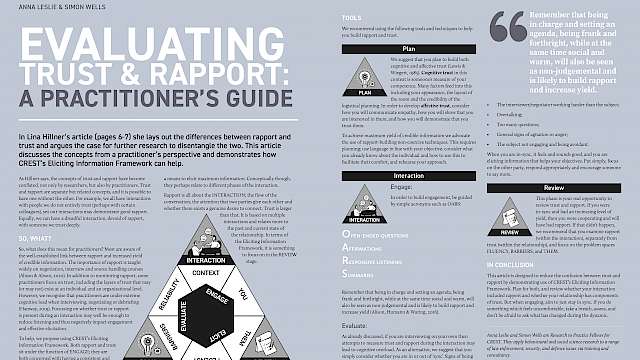

There is a wealth of behavioural and social science research that is relevant to the work of practitioners such as source handlers, police interviewers and negotiators. CREST has published over 100 articles directly relating to interviewing alone. Many of CREST’s other research streams, such as understanding beliefs and ideology, are also relevant to those interviewing suspected terrorists or handling sources who are providing intelligence on terrorist groups. Members of the police and intelligence agencies, alongside academic researchers, have been involved in using such research to train and advise their frontline colleagues for years.

However, there have been problems with applying the science when practitioners are out doing their jobs. Some training sticks well, but some does not. Some behavioural science techniques are easy to apply; others take time and a lot of guidance. In addition, the wealth of available information can itself be a blocker to engaging with behavioural science advice. Practitioners do not always know where to start, or how different approaches may support or contradict each other.

In 2016, CREST held a Masterclass in Eliciting Intelligence event for over 50 practitioners. One of the questions asked of the panel was what advice they had on when to use which technique. The panel agreed that this was the next step for the research community: determining which techniques are the most effective with which sort of interviewees and in what sort of context. Whilst that research is essential (especially in the context of agent handling, which is different from police interviewing), we add value not only by discovering something new but also by packaging it up in a digestible way (Taylor, 2016).

Part of my role as one of CREST’s Research to Practice Fellows is to develop innovative ways to encourage the application of science to our stakeholders’ day jobs. Discussions with our stakeholders a few years ago echoed the question asked in the CREST Masterclass. They wanted:

- Clear advice on when particular techniques should be used.

- Help in bridging the disconnect between the relevant research and the specific issues they were facing with a case.

- To understand how to pull together techniques and research to produce coherent training courses.

The framework

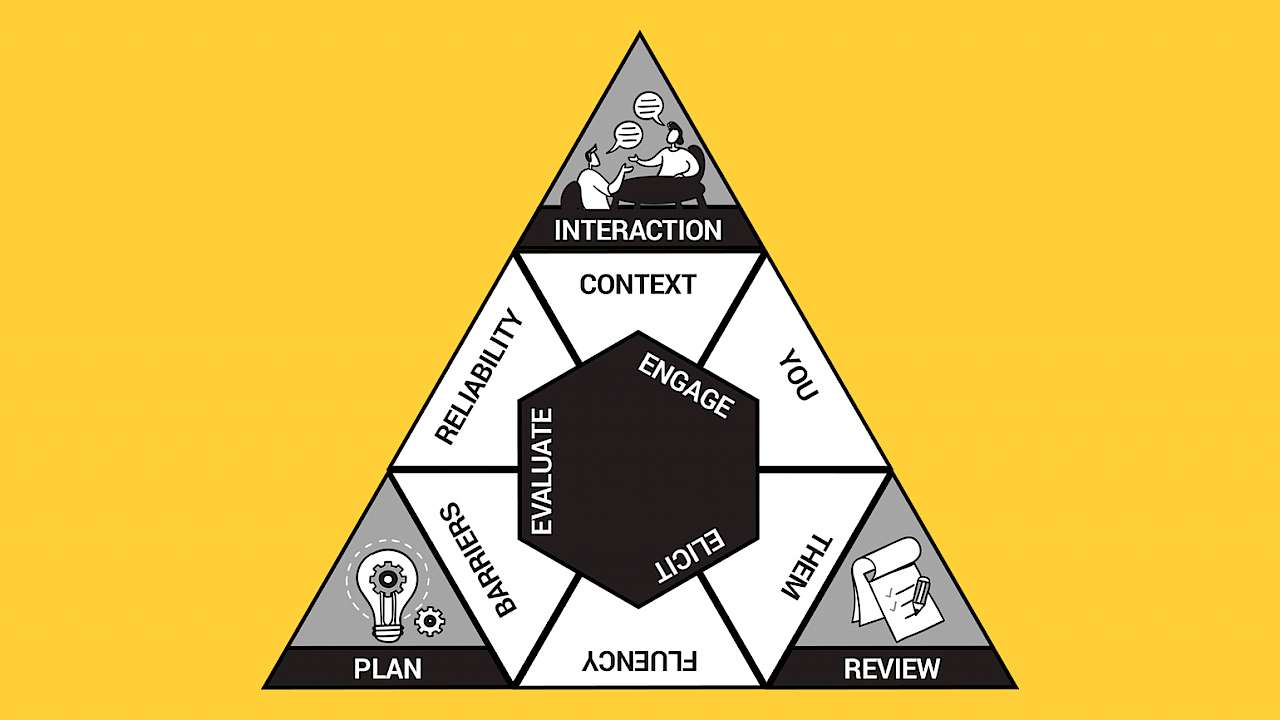

To help with these problems, I designed the Eliciting Information Framework based on a clustering process of existing training material, tools, techniques and research as well as an understanding of the process and decision making that practitioners engage in when interviewing or debriefing others.

This work builds on existing frameworks, such as that published by Brandon, Wells & Seale (2018), but is more flexible in its application. Brandon et al.’s science-based approach to interrogations details specific techniques that should be used at different stages of an interrogation, whereas the Eliciting Information Framework groups those techniques together to form categories. This allows the practitioner to select the most appropriate technique for their needs and also allows the framework to be updated with new research as it becomes available.

The Eliciting Information Framework is applicable to all practitioners whose role is to elicit information, negotiate, or build relationships with others, whether in a debrief, a more formal interview, or a conversation. It is designed to assist practitioners to better navigate the existing research. It aims to enable them to apply the research more easily to their work, ensuring maximum yield of information and successful relationship maintenance.

It is also a practical tool, designed to give structure to the planning, execution and reviewing phases of an interaction, both for those leading interactions and for those who offer advice, guidance and training. It provides all practitioners with a shared, evidence-based mental model and a shared language so they can think as a team to identify potential problems more easily and to reach meaningful solutions aided by behavioural science techniques.

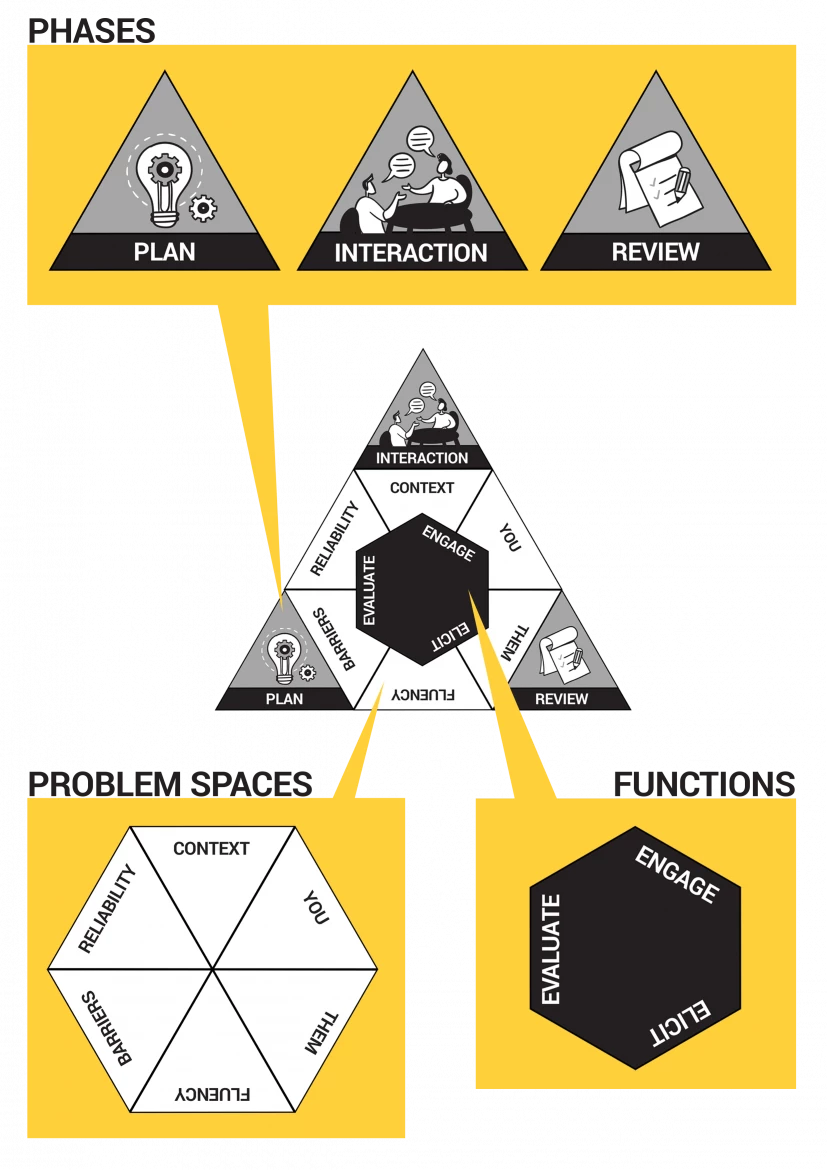

The framework has three core components:

- Phases

- Functions

- Problem spaces

1. The Phases

The phases (PLAN, INTERACTION, REVIEW) are equally important and should be carried out every time the framework is applied, but they will vary in the amount of time they take. They are also cyclical, so the decisions made in the REVIEW phase will feed into the PLAN phase next time.

2. The Functions

The functions are what practitioners should be focusing on and thinking about during each phase of the process. These are:

- EVALUATE (monitor in the moment what is happening)

- ENGAGE (build a positive relationship with your contact)

- ELICIT (gain as much credible information as possible)

3. The Problem Spaces

The six problem spaces are areas that practitioners can explore or seek to understand if they have particular issues with their case. These are not mutually exclusive (there is a necessary overlap between them all) but they are exhaustive (there should not be issues that do not fit within these categories).

The problem spaces are CONTEXT, YOU, THEM, FLUENCY, BARRIERS and RELIABILITY.

The benefits of the framework are that it is easy to remember and replicate. It can be used during an interview, debrief or negotiation, as well as when working on a case with others. Behavioural and social science tools and techniques can be mapped onto the framework to assist practitioners with their development (i.e. learning new skills) and problem solving on particular cases.

For example, all ‘planning’ tools, which help formulate an engagement strategy, can be grouped together. Or, if there is a particular issue with (for example) assessing the credibility of an interview after it has taken place, then tools that are mapped to REVIEW, ELICIT and RELIABILITY may be useful to try.

The framework can be taught early on in someone’s career and continue to be built upon. The shared mental model and shared language should aid decision making and advice delivery.

Evidence base

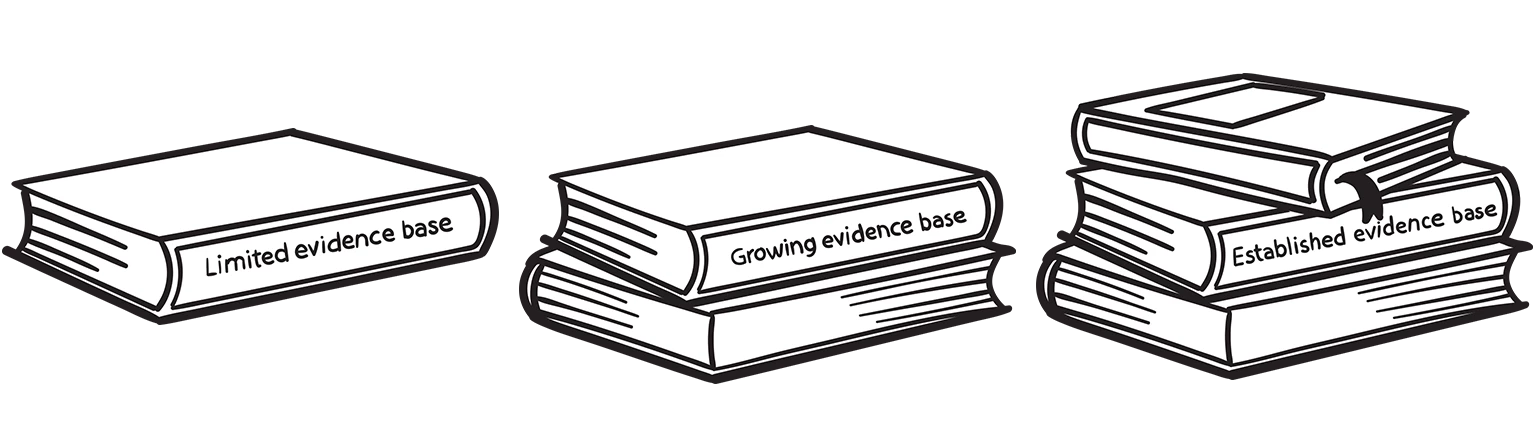

Due to a lack of field validation, it is not always possible to use techniques that are well developed from a scientific perspective in the particular context that many of our stakeholders work in.

However, just because techniques are validated in different contexts, or have only been tested in a handful of experiments, does not render them useless. On occasion, practitioners may need to use techniques derived from a limited evidence base, and our use of bookstack icons (below) offers a simple way for them to assess the evidence base on which a technique is built. These serve only as a guide but should help users make an informed decision on the potential utility of a particular technique.

In application to date, the framework has offered practitioners a useful vehicle for applying research to practice. It requires constant updating to ensure it draws on the latest and best research, and it requires training delivered by people with an understanding of both the problem area and the research. Early feedback has suggested the framework has the potential to play a positive and transformative role in how practitioners apply research, and we continue to seek new organisations and teams to help us test and improve its delivery.

Anna Leslie is a Research to Practice Fellow for CREST. She applies behavioural and social science research to a range of law enforcement, security, and defence issues via training and consultancy.

If you are interested in hearing more about the framework then please email [email protected]

Read more

- Brandon, S., & Wells, S. (2019). Science-Based Interviewing. BookBaby. ISBN: 9781543973440

- Brandon, S. E., Wells, S., & Seale, C. (2018). Science-based interviewing: Information elicitation. Journal of Investigative Psychology and Offender Profiling, 15(2), 133–148. https://bit.ly/3oWnPfh

- Taylor, P. (2016). The promise of social science. CREST Security Review, 1. https://bit.ly/3mGdBwQ

Copyright Information

As part of CREST’s commitment to open access research, this text is available under a Creative Commons BY-NC-SA 4.0 licence. Please refer to our Copyright page for full details.

IMAGE CREDITS: Copyright ©2024 R. Stevens / CREST (CC BY-SA 4.0)